Recent gains in computing power and generative algorithms have led to enormous advances in AI-generated counterfactuals (also known as deepfakes or synthetic data), which convince through their high level of realism. One promising application of such realistic AI-generated data is transparency with regard to black boxed automated decision making (ADM) systems.

An article by Katja de Vries

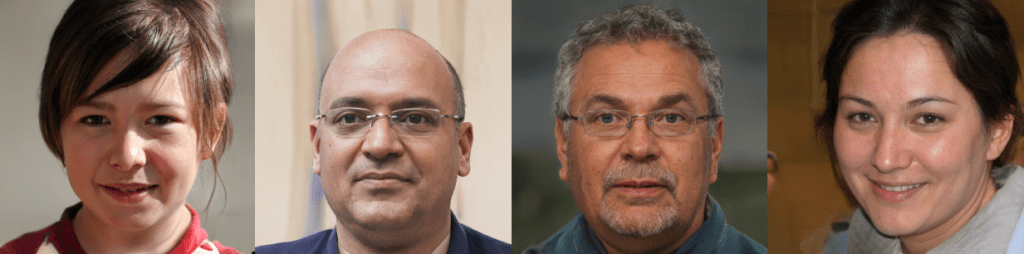

Figure 1. Faces of people that do not exist. Generated on Jan. 30, 2020 at https://www.thispersondoesnotexist.com/. Underlying model: StyleGAN, NVIDIA, public release December 2019. The images are created without human intervention and not copyright protected. See Tero Karras et al., Progressive Growing of GANs for Improved Quality, Stability, and Variation, International Conference on Learning Representations (ICLR) (2018).

A ray of light in the algorithmic black box?

In all spheres of life, decision making is increasingly supported by or outsourced to algorithmic systems. The logic of such automated decision making (ADM) systems is often black boxed, for three main reasons. Firstly, the algorithms used can be so complex that they are not easily interpretable or explainable. Secondly, an algorithm may be protected as a trade secret in a commercial setting where the ADM system gives a competitive edge. Thirdly, black boxing can serve to prevent gaming and manipulation of the ADM system. Black boxed ADM systems can easily lead to “Computer says no” situations, sometimes with dramatic consequences for the lives of affected individuals.

Transparency tools are often hailed as the panacea against the ailments of black boxed ADM systems. Yet transparency is an empty shell if the existing power structures prevent individuals from acting on it. A cleansing ray of light does not automatically result in accountability or fair systems. The problem with transparency can be subdivided into three parts. Firstly, it is far from obvious which part of an ADM system has to be unveiled to realize “true” transparency. Is it the source code? The data on which the algorithm was trained? Highly detailed and technical information might be too complex to grasp and not very enlightening about individual decisions. Should layman’s summaries of the inner workings be preferred? Here the risk is that explanations become so general that they are toothless and equally unhelpful in understanding why a decision was reached in a particular case. Secondly, perception does not equal understanding. Thirdly, neither perception nor understanding automatically results in empowerment of the subjects of ADM systems. In fact, interpretability and transparency tools can give a false sense of reliability to ADM systems, resulting in disempowerment.

Human ways of explaining: individually empowering transparency

If a bank denies you a loan based on an algorithmic assessment, you are probably most helped by a local explanation (why did the ADM system classify you as an unsuitable debtor?) that also recommends some reasonable steps you could take to reach a more favorable outcome. This is where “What if...?” explanations come into the picture. Such explanations are both intuitive and counterintuitive as a transparency tool. Counterfactuals are counterintuitive because transparency is deeply tied up with the idea that some real truth has to be unveiled, which is in direct opposition to speculative fantasies. What if Hitler had been shot in 1944? What if Germany had won the Second World War? Such thought experiments can be an excellent point of departure for historical science fiction, but are not easily squared with a traditional notion of transparency. At the same time, counterfactual explanations have an enormous intuitive appeal because they mimic the explanations that humans use in their day-to-day interactions. For example, when students challenge the grading decisions that I take as a university teacher, fortunately nobody has ever asked for a brain scan or a full record of all the other exams that I have graded in my life and that might have shaped my assessment. Students usually want to know how they could have done better: it is the counterfactual that matters more than the factual. Empowerment and accountability are deeply connected to the possibility of imagining things differently, and to being able to come up with parallel histories and realities. The shift from the factual (“How is this really working?”) to the counterfactual (“What would happen if . . .?” and “How could it work differently?”) is important for creating actionable transparency at an individual level.

Calculemus!

Actionable counterfactual explanations have to be the nearest alternative reality where things would have turned out differently. They look for the smallest change needed to make an algorithmic model classify an input differently. Let’s imagine a forum for online sellers that employs an ADM tool to automatically banish potentially fraudulent sellers. To avoid “computer says no” situations, banished sellers could be given a counterfactual as an explanation for the banishment decision: the hypothetical nearest datapoint that would not be classified as fraudulent by the machine learning (ML) system. By comparing oneself with one’s hypothetical (or “synthetic”) alter ego, one could gain insight into the reasons underlying the banishment. As Hernandez writes: “[T]he ways in which the seller most differs from its neighbors constitute the most likely reasons for the decision.”
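To make the idea of a “nearest datapoint” concrete, here is a minimal sketch in Python. Everything in it is an assumption made up for illustration: the two features, the toy scoring rule in `is_flagged`, the candidate grid and the `distance` function do not come from any real platform or from the cited literature. The sketch simply searches a grid of hypothetical sellers for the closest one that the toy model would not flag, and then lists how that alter ego differs from the actual seller.

```python
from itertools import product

FEATURES = ("transactions_per_day", "avg_stars")

def is_flagged(transactions_per_day, avg_stars):
    # Hypothetical toy model standing in for the black boxed ADM system
    return 2 * transactions_per_day - 800 * avg_stars + 1000 > 0

def distance(a, b):
    # Assumed notion of "near": 1000 transactions weigh as much as one star
    return abs(a[0] - b[0]) / 1000 + abs(a[1] - b[1])

def nearest_counterfactual(seller, candidates):
    """Closest candidate that the toy model would NOT flag, or None."""
    allowed = [c for c in candidates if not is_flagged(*c)]
    return min(allowed, key=lambda c: distance(seller, c)) if allowed else None

seller = (2000, 3.0)  # flagged by the toy model
# Grid of hypothetical sellers: 0..3000 transactions, 1.0..5.0 stars
candidates = list(product(range(0, 3001, 100), [s / 2 for s in range(2, 11)]))

counterfactual = nearest_counterfactual(seller, candidates)
# Report only the features in which the alter ego differs from the seller
changes = {name: cf - own
           for name, own, cf in zip(FEATURES, seller, counterfactual)
           if cf != own}
print(counterfactual)  # (700, 3.0)
print(changes)         # {'transactions_per_day': -1300}
```

In this toy setting the explanation handed to the seller would read: “a seller like you with 1,300 fewer transactions per day would not have been flagged”.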

Counterfactual explanations thus become conceived as an optimization problem which can be formulated and solved in mathematical terms. The possibility of framing the construction of counterfactual explanations as a mathematical problem, first proposed in 2018 by Sandra Wachter et al., has undoubtedly contributed to their popularity. It conforms to Leibniz’s Calculemus! (Let us calculate!): the happy belief that any problem can be solved by calculation. Individually empowering information that does not disclose too much of the underlying logic and has the solidity and neutrality of mathematics: what is not to like? However, in the next section I’ll show that the mathematical optimization leading up to a counterfactual is far from neutral.
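As a rough sketch of what such a formulation can look like, the search for a counterfactual can be written as a constrained minimization. The notation is an assumption introduced here for illustration and is not taken from the cited papers: $f$ is the model, $x$ the data of the affected person, $y'$ the desired outcome (for example “not fraudulent”), and $d$ a chosen distance between datapoints.

```latex
x^{*} \;=\; \arg\min_{x'} \; d(x, x')
\quad \text{subject to} \quad f(x') = y'
```

The formula looks neutral, but it only yields an answer once somebody has decided what $d$ measures and which candidate datapoints $x'$ are admissible.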

Limitations

Let’s go back to the case where a seller on an online platform has been classified by an ADM system as fraudulent. The ideal is that there is one simple nearest hypothetical case that gives the seller unambiguous, actionable insight: “a person who is precisely like you but who has 10,000 fewer transactions per day would not be classified as fraudulent”. In reality, however, ADM decisions (not unlike human decisions) are often complex composites of interrelated reasons, and unambiguous explanations are after-constructions and simplifications. As Barocas et al. have argued, counterfactual explanations are the result of many decisions that, if taken differently, could have led to a different counterfactual. As shown convincingly in the movie Rashomon, different explanations of the same set of events can be equally convincing. This Rashomon effect in counterfactuals means that the banished seller could probably be given many alternative counterfactuals (“somebody who works 40% less during the night”, “somebody who on average gets one more star on reviews”, “somebody who has a bank account in the EU”, “somebody with a different profile picture”, etc.), including complex composites (such as “have 350 fewer transactions per day, and have a different gender, and have a bank account in the EU, and…”), which can also contradict one another. The crucial question here is which counterfactual to pick. Dandl and Molnar aptly summarize the options: “This issue of multiple truths can be addressed either by reporting all counterfactual explanations or by having a criterion to evaluate counterfactuals and select the best one.” Reporting all counterfactuals can lead to a confusing information overload, or to disclosing too much of the underlying model.

Making a selection is equally problematic. Which criterion should be used for the selection? Should one abstain from reporting counterfactuals with a different gender or ethnicity because this is something that the seller cannot easily change? Or is this a cover-up of a discriminatory practice? Equally problematic is the definition of what counts as the “nearest” hypothetical. Is a counterfactual alter ego that is exactly like the online seller apart from gender nearer than one that has one additional year of high-school education? Which counterfactual lookalike is nearer: one with a bank account in a different location, or one that gets one more star in the reviews? Constructing a counterfactual is like a walk through the garden of forking paths. That normative decisions have to be taken is not a problem, as long as they are not shrouded under a cloak of neutrality and objectivity.
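The dependence on what counts as “near” can be illustrated with a small Python sketch. All of it is hypothetical: the toy rule in `is_flagged`, the two features, the candidate grid and the scale parameters are assumptions chosen for illustration only. The same seller and the same model are used twice; the only thing that changes is how many transactions are considered “as big a change” as one review star.

```python
from itertools import product

def is_flagged(transactions_per_day, avg_stars):
    # Hypothetical toy model, not taken from any real platform
    return 2 * transactions_per_day - 800 * avg_stars + 1000 > 0

def nearest_unflagged(seller, transaction_scale, star_scale):
    """Nearest unflagged grid point under a scaled absolute-difference distance."""
    grid = product(range(0, 3001, 100), [s / 2 for s in range(2, 11)])

    def distance(c):
        return (abs(seller[0] - c[0]) / transaction_scale
                + abs(seller[1] - c[1]) / star_scale)

    return min((c for c in grid if not is_flagged(*c)), key=distance)

seller = (2000, 3.0)  # (transactions per day, average review stars)

# Choice A: 1000 transactions count as much as one review star
cf_a = nearest_unflagged(seller, transaction_scale=1000, star_scale=1)
# Choice B: 100 transactions count as much as one review star
cf_b = nearest_unflagged(seller, transaction_scale=100, star_scale=1)

print(cf_a)  # (700, 3.0)
print(cf_b)  # (1500, 5.0)
```

Under choice A the seller is told to cut 1,300 transactions per day; under choice B the seller is told to cut 500 transactions and gain two review stars. Neither answer is more “mathematically correct” than the other: the difference comes entirely from a scaling decision that somebody had to make.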

Conclusions

There is no doubt that counterfactual explanations are very promising tools for providing transparency about individual decisions made by ADM systems, because they conform more closely to how human explanations work: through counterfactuals. Yet the construction of counterfactuals is a normative process, and it is important that this aspect is not black boxed.

This blogpost builds on a paper to be published in March/April 2021: Katja de Vries (2021), Transparent Dreams (Are Made of This): Counterfactuals as Transparency Tools in ADM, in: Critical Analysis of Law, Vol 8, No 1 (spring 2021, special issue on transparency).

Published under licence CC BY-NC-ND.